March 16, 2026

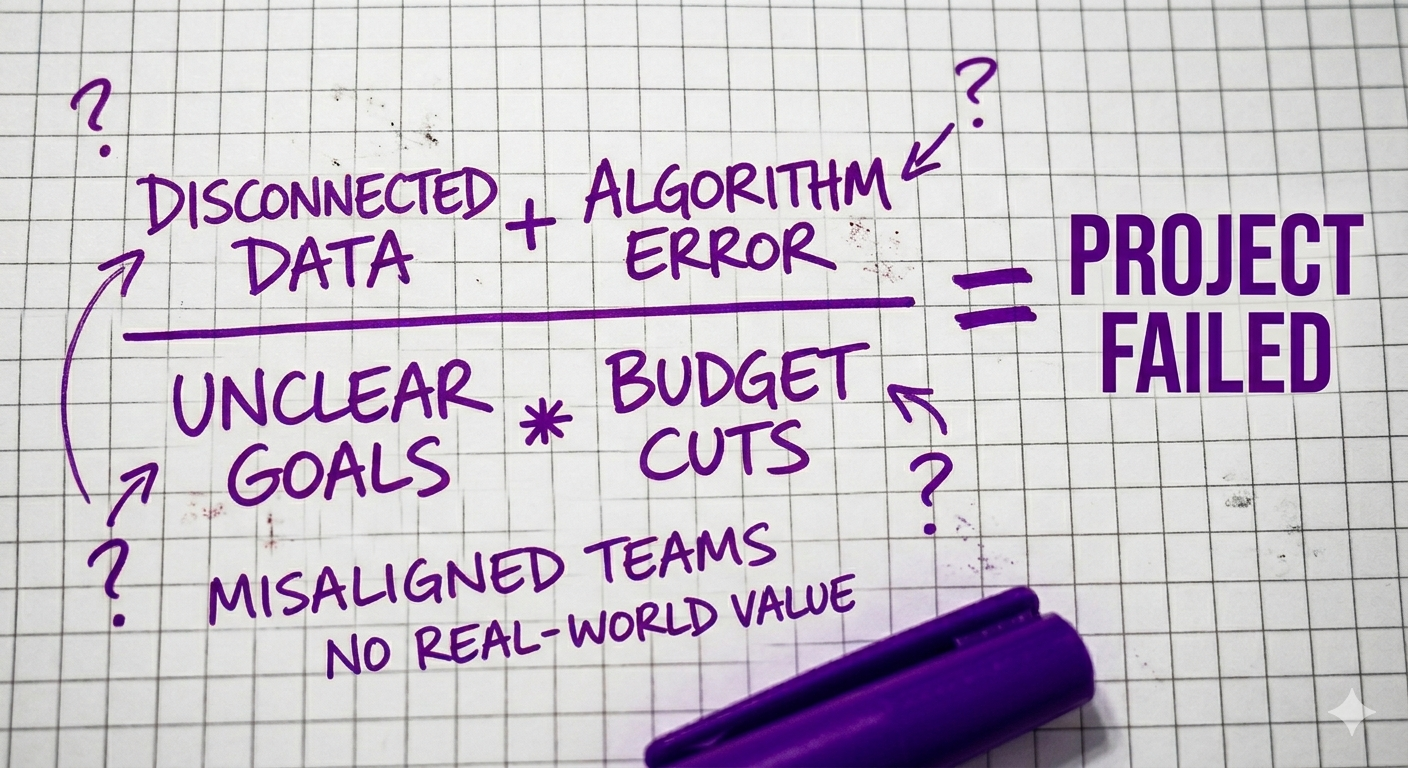

Most AI projects don't fail because the technology breaks. They fail because the organization around the technology wasn't set up for what the project actually required.

This distinction matters more than any failure statistic. If the problem were purely technical, better engineers or a better model would fix it. But the pattern across dozens of post-mortems, industry surveys, and our own client engagements is consistent: the model usually works. The organization usually doesn't.

In 2024, the RAND Corporation published a research report based on structured interviews with 65 experienced data scientists and engineers. The researchers identified five root causes of AI project failure: miscommunication about the problem, inadequate data, technology chasing, underinvestment in infrastructure, and attempting problems too difficult for current AI. Notice what is missing from that list: model failures. All five are organizational failures. (The report is also transparent about its limits. The interviewees were mostly non-managerial engineers, which means the findings may skew toward identifying leadership problems. That's a useful lens, but not the only one.)

McKinsey's November 2025 global survey of nearly 2,000 participants across 105 countries found that 88% of organizations now use AI in at least one business function. But only about 6% qualify as "high performers," meaning they attribute meaningful financial impact to AI. The rest are using AI. They just aren't getting significant results from it.

That gap, between adoption and impact, is where these seven mistakes live.

AI gets approved by a committee but owned by nobody.

This is the most common organizational failure pattern, and the one most likely to be dismissed as "soft" or "political." It isn't. It's structural. When a project doesn't have someone with P&L authority who owns the business outcome, not just the delivery timeline, decisions stall. Data access requests sit in queues. Scope questions get punted. The engineering team makes design choices without business context because there's no one to ask.

McKinsey's data shows that AI high performers are about three times more likely to report strong senior leadership ownership. That finding is consistent with what we see in practice: the single biggest predictor of whether an AI project delivers value is whether someone with decision-making authority stays engaged past kick-off. Not "sponsors the project in a leadership meeting." Stays engaged. Reviews progress. Makes trade-off decisions. Unblocks the team when they hit organizational friction.

The failure mode is predictable. The project launches with energy. The sponsor gets pulled into other priorities. Weeks pass. The team builds something nobody asked for because nobody was in the room to course-correct. Three months later, someone asks for a status update and the answer is complicated.

Diagnostic questions:

Engineering builds the model. Nobody redesigns the workflow it's supposed to improve.

This is the mistake that creates the most expensive failures, because everything looks like it's working until you try to deploy. The model performs well in testing. The accuracy metrics are good. The demo is impressive. Then you discover that the business process the AI was designed to improve doesn't actually work the way anyone assumed, that nobody retrained the team that's supposed to use the output, that the CRM routing logic wasn't updated, that the escalation rules still point to the old manual process.

The AI works. The organization ignores it.

McKinsey's 2025 survey tested 31 organizational variables. Workflow redesign had one of the strongest correlations with whether an organization saw enterprise-level financial impact from AI. Not model sophistication. Not data quality. Workflow redesign. The organizations capturing value redesigned the process before or alongside building the model. They asked: if this AI works perfectly, what changes in how humans do their jobs? And they made those changes.

Most organizations skip that question entirely. They treat AI as something you bolt onto an existing process. The process stays the same. The AI output goes into a dashboard nobody checks.

Diagnostic questions:

Teams pick a foundation model before confirming they have the data to support the use case. Then they spend months on data cleanup they didn't budget for.

RAND's researchers identified inadequate data as one of the most common root causes of AI project failure. One interviewee in their study made a point that captures the pattern well: business leaders often believe they have good data because they receive regular reports, without realizing that the data behind those reports may not be suitable for training a model.

Industry estimates vary, but data preparation routinely accounts for 20% to 50% or more of total AI project cost or effort, depending on the organization's data maturity. Most teams don't budget for it as a separate line item. It's buried inside "development," which means it surfaces as a timeline slip, not a planned phase.

The pattern unfolds like this: a team selects a model and begins development. Weeks in, they discover the data is fragmented across systems, inconsistent in format, or missing key fields. They pivot to data cleanup. The timeline stretches. Budget tightens. Leadership starts asking uncomfortable questions. Six months later, the project is still preparing data, and nobody has built the thing they set out to build.

This doesn't mean you need perfect data before starting. It means you need to know what your data looks like before you commit to a timeline and a budget.
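Knowing what your data looks like can start with something as small as a profiling pass over a sample export. The sketch below is one minimal way to do it, assuming records arrive as a list of Python dicts: for each field the use case depends on, it reports how often the value is missing and how many distinct formats ("shapes") appear. Shape counts are most informative for structured fields such as dates and IDs, where mixed formats are a common surprise. The field names, sample rows, and the 5% missing-value threshold are hypothetical placeholders, not a standard.

```python
import re
from collections import Counter

def value_shape(value):
    """Reduce a value to a coarse shape so format inconsistencies stand out,
    e.g. '2024-01-05' -> '9999-99-99' and '05/01/2024' -> '99/99/9999'."""
    if value is None or str(value).strip() == "":
        return "<missing>"
    text = re.sub(r"[0-9]", "9", str(value).strip())
    return re.sub(r"[A-Za-z]", "a", text)

def profile(records, required_fields, max_missing_rate=0.05):
    """Per field: missing-value rate, number of distinct shapes, and a readiness flag.

    max_missing_rate is an illustrative threshold, not an industry benchmark.
    """
    report = {}
    total = len(records)
    for field in required_fields:
        shapes = Counter(value_shape(r.get(field)) for r in records)
        missing = shapes.pop("<missing>", 0)
        rate = missing / total if total else 1.0
        report[field] = {
            "missing_rate": rate,
            "distinct_shapes": len(shapes),
            "ready": rate <= max_missing_rate,
        }
    return report

# Hypothetical sample export: note the mixed date formats and blank regions.
sample = [
    {"customer_id": "C001", "signup_date": "2024-01-05", "region": "EU"},
    {"customer_id": "C002", "signup_date": "05/01/2024", "region": ""},
    {"customer_id": "C003", "signup_date": "2024-02-11", "region": None},
    {"customer_id": "C004", "signup_date": None, "region": "US"},
]

for field, stats in profile(sample, ["customer_id", "signup_date", "region"]).items():
    print(field, stats)
```

A report like this won't tell you whether the data can support a model, but it turns "the data is probably fine" into numbers you can put in front of whoever is setting the timeline.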

Diagnostic questions:

The existing dev team "learns AI" on the job. The first three months produce nothing deployable because the learning curve is steeper than expected.

There's a real difference between a strong software engineer and an engineer who has shipped AI systems to production. The skills overlap substantially, but the gaps matter. Data pipeline architecture. Model evaluation and testing. Prompt engineering. Retrieval-augmented generation. Monitoring for model drift over time. These aren't skills a senior backend developer absorbs in a few weeks of tutorials, no matter how talented they are.

RAND's report noted that underinvestment in specialized infrastructure roles, particularly data engineers and ML engineers, significantly increases the time required to develop and deploy AI models. This is a fair observation, not a criticism of general engineering talent. Building production AI is a specialty, the way building distributed systems or writing compilers is a specialty. Expecting generalists to pick it up immediately is an organizational planning failure, not a reflection of their ability.

The failure mode: a well-intentioned team spends weeks evaluating frameworks, running tutorials, experimenting with approaches they haven't used before. They're learning, not building. By the time they produce something, the business has lost patience or the budget has thinned. Nobody did anything wrong. The timeline was wrong.

For most companies at this stage, a fractional team with AI production experience, engaged for four to twelve weeks, will get further than a permanent hire who spends months ramping up.

Diagnostic questions:

The project starts with a vague brief, "add AI to the product," and scope creeps until the budget is exhausted.

This failure pattern should be familiar to anyone who has managed a software build. It's also the one most likely to repeat with AI, because AI projects tend to carry even more ambiguity than traditional software. The capabilities feel open-ended. The technology feels magical. The brief reflects that: broad, aspirational, under-defined.

"We want to use AI to improve customer experience." What does that mean? Which customers? Which touchpoint? What does "improve" look like? How will you measure it? These questions feel slow when everyone is under pressure to show progress. So nobody asks them before development starts.

The result: the team builds something. Leadership reviews it. It's not what they had in mind. Scope adjusts. Then adjusts again. Budget burns. The project either gets killed or ships late, over budget, and underwhelming.

What's missing in this pattern is not a better engineering team. It's an independent reference point. A structured estimate, completed before development begins, that forces clarity about what's being built, what it costs, and what assumptions are being made. That reference point shouldn't come from the vendor building the project, because their incentives aren't aligned with yours on this question.

This is the gap the Fraction estimator was built for. Feed it a product brief. It returns a structured breakdown by feature area, with story point ranges and assumption flags. It doesn't replace a vendor quote. It gives you a baseline before you negotiate one, so ambiguity becomes a known variable, not a hidden cost.

Diagnostic questions:

The proof of concept works in a notebook. Nobody planned for deployment, monitoring, or maintenance. The pilot "succeeds" and then nothing happens.

This is the most frustrating failure pattern because it often follows genuine technical success. The prototype works. Stakeholders are excited. Someone builds a slide deck showing the projected impact. Then the project stalls, because nobody budgeted for the bridge between "it works on my laptop" and "it runs in production, at scale, with error handling and monitoring."

That bridge is not a small gap. It includes infrastructure provisioning, security review, integration with existing systems, load testing, building monitoring dashboards, alerting for model degradation, and planning for how the model gets updated over time. These steps are not optional. They're the difference between a demo and a product. And they're often more expensive and time-consuming than building the prototype was.
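To make one item on that list concrete, here is a minimal sketch of alerting for model degradation. It assumes ground-truth outcomes eventually arrive for each prediction (for example, whether a flagged customer actually churned) and compares rolling accuracy over a recent window against the accuracy measured at launch. The class name, window size, and 5-point margin are hypothetical; where labels are delayed or never arrive, teams typically monitor shifts in input distributions instead.

```python
from collections import deque

class DegradationAlert:
    """Hypothetical monitor: alert when rolling accuracy over the last `window`
    labeled predictions falls more than `max_drop` below launch accuracy."""

    def __init__(self, launch_accuracy, window=200, max_drop=0.05):
        self.launch_accuracy = launch_accuracy
        self.max_drop = max_drop
        # 1 = prediction turned out correct, 0 = wrong; oldest entries fall off.
        self.outcomes = deque(maxlen=window)

    def record(self, was_correct):
        self.outcomes.append(1 if was_correct else 0)

    def rolling_accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def should_alert(self):
        # Wait for a full window so a handful of early errors can't trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.launch_accuracy - self.max_drop

# Hypothetical stream: the model was 90% accurate at launch, now 80% correct.
monitor = DegradationAlert(launch_accuracy=0.90, window=100, max_drop=0.05)
for i in range(100):
    monitor.record(i % 5 != 0)
print(monitor.rolling_accuracy(), monitor.should_alert())  # 0.8 True
```

The check itself is a few lines. The expensive part is everything around it: collecting the outcomes, routing the alert to someone who owns the response, and having a plan for retraining when it fires.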

S&P Global's 2025 survey reported that the average organization scraps about 46% of its AI proof-of-concepts before they reach production. That number is worth pausing on. It's not that nearly half of AI ideas are bad. It's that nearly half of the ideas that were good enough to prototype didn't have a viable path from pilot to production.

To be fair, not every pilot should become a production system. Some experiments should fail. That's the point of experimenting. But too many organizations treat the pilot as the finish line rather than the halfway point, then act surprised when nothing ships.

Diagnostic questions:

The team reports on model accuracy and sprint velocity. Nobody tracks whether the AI actually moved a business metric.

This is the mistake that lets every other failure hide behind good-looking dashboards.

An AI team reports 92% accuracy. That sounds good. But accuracy on what? Against which dataset? And does that accuracy translate to something the business cares about? A model that predicts customer churn is worthless if nobody acts on the predictions. A content generation tool that produces clean output is irrelevant if nobody uses it because it doesn't match the brand voice.
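A quick, hypothetical illustration of why "accuracy on what?" matters: if only 8% of customers in the evaluation set churned, a model that predicts "no churn" for everyone scores 92% accuracy while catching none of the churners. The sketch computes accuracy alongside recall (the share of actual churners the model flags) on made-up labels; the data is invented purely to show the arithmetic.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def recall(y_true, y_pred, positive=1):
    """Share of actual positives (here, churners) that the model flagged."""
    preds_for_positives = [p for t, p in zip(y_true, y_pred) if t == positive]
    if not preds_for_positives:
        return 0.0
    return sum(p == positive for p in preds_for_positives) / len(preds_for_positives)

# Hypothetical evaluation set: 100 customers, 8 of whom churned (label 1).
y_true = [1] * 8 + [0] * 92
# A "model" that never predicts churn.
y_pred = [0] * 100

print(f"accuracy = {accuracy(y_true, y_pred):.2f}")  # accuracy = 0.92
print(f"recall   = {recall(y_true, y_pred):.2f}")    # recall   = 0.00
```

Recall is only one alternative lens. The larger point holds regardless of metric: even a model that flags churners well creates no value until someone acts on the list.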

McKinsey's 2025 survey found that only about 39% of organizations report any enterprise-wide earnings impact from AI, despite 88% saying they use it. Many of the organizations in the gap between "using AI" and "seeing impact" have teams actively reporting on technical metrics, sprint progress, and model performance. The activity is real. The business impact is not.

The fix requires discipline more than technology: define the business metric before you start building. Not "improve accuracy." Something like: reduce average support ticket resolution time by 20%. Increase first-pass yield by 5%. Cut proposal generation time from six hours to one. If the AI project can't be connected to a metric like this, it's an experiment, not a project. There's nothing wrong with experiments, but you should fund them like experiments, not like product development.
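A target like "reduce average support ticket resolution time by 20%" has the advantage of being mechanically checkable once baseline and post-launch numbers exist. The sketch below assumes you have resolution times in hours for a baseline period and for a period after the AI-assisted workflow went live; the function names and sample figures are hypothetical.

```python
from statistics import mean

def relative_change(baseline, current):
    """Fractional change from baseline to current (negative means a reduction)."""
    return (current - baseline) / baseline

def met_reduction_target(baseline_hours, current_hours, target_reduction=0.20):
    """True if mean resolution time fell by at least target_reduction (0.20 = 20%)."""
    change = relative_change(mean(baseline_hours), mean(current_hours))
    return -change >= target_reduction

# Hypothetical resolution times (hours) before and after launch.
baseline = [10.0, 12.0, 8.0, 10.0]  # mean 10.0
after = [7.0, 8.0, 7.5, 7.5]        # mean 7.5

change = relative_change(mean(baseline), mean(after))
print(f"reduction: {-change:.0%}")               # reduction: 25%
print(met_reduction_target(baseline, after))     # True
```

The mean matches the "average" in the target as stated, but it is sensitive to a few very long tickets; if the business cares about the typical ticket, agreeing up front to use the median instead is exactly the kind of decision that belongs before development starts.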

Diagnostic questions:

If three or more of these patterns describe your current AI initiative, the project doesn't need more engineering hours. It needs a reset.

That doesn't mean starting over. It means stepping back far enough to answer the questions that were skipped the first time. What problem are we actually solving? What does success look like in business terms? Is our data ready? Is our team right for this scope? Is there a clear path from pilot to production? These are scoping questions. They should have been answered before development started. Answering them now is still cheaper than continuing to build in the wrong direction.

The organizations that recover fastest tend to have one thing in common: they stop asking "how do we fix the model?" and start asking "did we define the problem correctly?"

The answer, in most cases, is no.

All seven mistakes trace back to the same root cause: the organization treated AI as a technology purchase instead of a business change. Leadership expected the tool to create value on its own. It didn't.

AI projects succeed when someone with authority owns the outcome. When the workflow is redesigned before the model is built. When data readiness is assessed before the timeline is set. When the team has production experience. When the scope is defined and estimated independently. When there's a real plan for deployment and maintenance. When success is measured in business terms, not technical ones.

None of that is about the technology. All of it is about how the organization makes decisions.

If you're evaluating an AI initiative and want an independent read on scope, cost, and complexity before committing budget, the Fraction project planner gives you a structured estimate in minutes. It won't tell you whether your organization is ready. But it will tell you what you're actually buying, which is more than most vendors will offer before you sign.