March 15, 2026

Everyone wants an AI agent. Few people can describe what that means.

"Agentic AI" has become the phrase vendors attach to almost anything, from a chatbot with a slightly better prompt to a genuinely autonomous system executing multi-step workflows. Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. The demand is real. But if you are a non-technical operator trying to evaluate whether your business needs custom AI agent development, and what it should cost, the current landscape is almost impossible to read.

This article cuts through that. It explains what AI agents actually are, how they differ from each other, what each type costs to build, and when building custom makes more sense than buying off-the-shelf.

A chatbot responds to input. An agent pursues a goal.

That's the simplest useful distinction. A chatbot takes a message and returns a message. An AI agent takes a goal, decides what actions to take to achieve it, executes those actions using tools, and adjusts based on the results. It can call APIs, read and write files, search the web, send emails, query databases, or trigger other software systems.

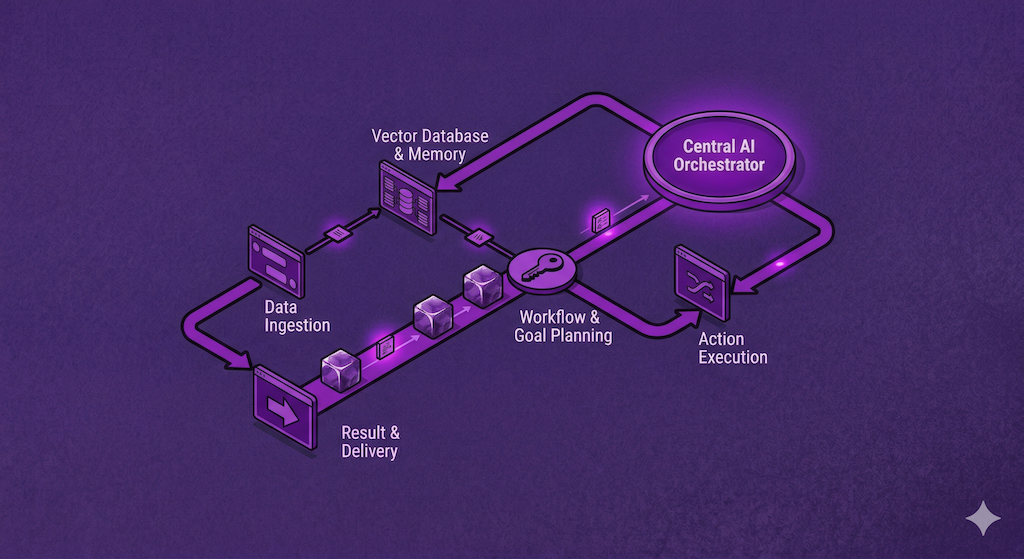

The agent uses a large language model (LLM) as its reasoning engine, but the LLM is not the product. The product is the loop: perceive, reason, act, observe, repeat.

IBM's technical documentation describes this cycle well: an agentic system collects data from its environment, reasons over it, sets objectives, selects an action, executes it, and then evaluates the outcome to improve future decisions. That loop is what separates an agent from a model.
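The loop is easier to picture in code. The sketch below is a minimal illustration, not a production design: `fake_reasoner` stands in for an LLM call, and `TOOLS`, the tool names, and `run_agent` are hypothetical names introduced here for the example.

```python
from typing import Callable

# Hypothetical tool registry: each tool takes a string and returns a string.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "shout": lambda text: text.upper(),
}

def fake_reasoner(goal: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: picks the next action from the goal and history."""
    if not observations:
        return ("word_count", goal)        # nothing observed yet: call a tool
    return ("finish", observations[-1])    # otherwise stop and return the last result

def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []                          # what the agent has seen so far
    for _ in range(max_steps):                            # repeat, with a hard step limit
        action, arg = fake_reasoner(goal, observations)   # reason
        if action == "finish":
            return arg
        result = TOOLS[action](arg)                       # act
        observations.append(result)                       # observe
    return "stopped: step limit reached"

print(run_agent("count the words in this goal"))
```

The step limit matters as much as the loop itself: without it, an agent that never decides it is finished keeps calling tools indefinitely.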

What makes an agent an agent is goal-directed behavior plus tool use. Remove either one, and you have something simpler.

One distinction worth holding onto: unlike standard AI models that operate within fixed constraints and require human intervention at each step, agentic systems are designed for autonomy across sequences of decisions. The term "agentic" refers directly to this capacity to act independently and purposefully, not just to respond.

Not all agents are the same in complexity, risk, or cost. Most conversations about custom AI agent development collapse because buyer and vendor are picturing different tiers.

Here's a working taxonomy.

What it is. Tier 1 is a single-tool agent: one tool, one task, one loop. It takes input, calls a tool, returns output. "Summarize this document and extract the action items." "Classify this customer support ticket and route it."

Build stack. LLM selection (OpenAI, Anthropic, Gemini, or open-source depending on latency and cost needs). One tool integration (file reader, API call, database query). A prompt chain with basic input/output schema. Minimal state, no memory across sessions. Guardrails: output validation, error handling, rate limiting.
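As a rough illustration of the output-validation guardrail in that stack, the sketch below routes a support ticket and refuses to pass along anything outside a fixed set of labels. Everything here is an assumption made for the example: `stub_model` stands in for a real LLM call, and the queue names are invented.

```python
ALLOWED_QUEUES = {"billing", "technical", "account"}   # hypothetical routing labels

def stub_model(ticket_text: str) -> str:
    """Stand-in for an LLM call; a real model can return anything."""
    text = ticket_text.lower()
    if "invoice" in text or "refund" in text:
        return "Billing "                  # stray casing and whitespace, on purpose
    if "error" in text or "crash" in text:
        return "technical"
    return "I'm not sure, maybe sales?"    # free text that matches no label

def route_ticket(ticket_text: str) -> str:
    raw = stub_model(ticket_text)
    label = raw.strip().lower()            # normalize before validating
    if label in ALLOWED_QUEUES:
        return label
    return "needs_human_review"            # unvalidated output never goes downstream
```

The design choice is that the agent's answer is treated as untrusted input: it either matches the schema or it is diverted to a person.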

What's harder than it looks. Output reliability at scale. A single-tool agent that works 90% of the time in demos fails operationally when it's processing 500 documents a day: at that rate, roughly 50 documents a day come back wrong. Production hardening often takes more engineering time than the initial build. This is consistent with what Gartner flagged when it predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, citing poor data quality, escalating costs, and unclear business value.

Story points: 20 to 40 | Estimated cost: $3,000 to $6,000 | Timeline: 2 to 4 weeks

This is the right tier for well-scoped internal automation: a document classifier, a data extractor, a simple intake router.

What it is. Tier 2 is an orchestrated agent: it executes a sequence of steps to complete a more complex goal. "Research this company, summarize the findings, draft an outreach email, and flag it for human review before sending." Each step depends on the previous. The agent manages state across the sequence.

Build stack. LLM (usually higher capability than Tier 1, because multi-step reasoning increases error compounding risk). Multiple tool integrations (web search, CRM API, email, document store). State management across steps. A planning layer: either a simple chain-of-thought loop or a structured planner (ReAct, LangGraph, or similar). Human-in-the-loop checkpoints for compliance-sensitive environments. Eval framework to measure whether the agent's output is correct.
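The error-compounding point is simple arithmetic. The sketch below assumes each step succeeds independently with the same probability, which is a simplification, and the 95% figure is purely illustrative rather than a measured number.

```python
def chain_success_rate(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_success ** steps

# Illustrative only: a step that is right 95% of the time.
for steps in (1, 4, 8):
    print(steps, "steps:", round(chain_success_rate(0.95, steps), 3))
```

Under those assumptions, a four-step workflow completes cleanly about 81% of the time and an eight-step workflow about 66% of the time, which is why the planning layer and eval framework are part of the stack rather than extras.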

What's harder than it looks. Error recovery and branching. When step 3 fails because the CRM API returned unexpected data, what does the agent do? Writing robust failure logic for every fork is the part that takes real engineering time and is almost always underestimated in early quotes.
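One common shape for that failure logic is "validate, retry, then escalate". The sketch below is a hedged example of that pattern, not a prescribed design: `run_step`, the `company_name` check, and the choice of exceptions are all assumptions made for illustration.

```python
from typing import Callable

def run_step(step: Callable[[], dict], max_attempts: int = 3) -> dict:
    """Run one workflow step; retry on failure, then hand off to a human."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            result = step()
            if "company_name" not in result:      # check the shape we expect
                raise ValueError("missing company_name")
            return {"status": "ok", "data": result, "attempts": attempt}
        except (ValueError, ConnectionError) as exc:
            last_error = str(exc)                 # remember why this attempt failed
    # Every attempt failed: stop the workflow instead of guessing.
    return {"status": "needs_human", "error": last_error, "attempts": max_attempts}
```

A real build needs a decision like this for every step and every tool, which is where the underestimated engineering time goes.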

Story points: 60 to 120 | Estimated cost: $9,000 to $18,000 | Timeline: 4 to 8 weeks

This tier is appropriate for knowledge work automation where sequence matters: research workflows, proposal generation, client intake, compliance documentation.

What it is. Tier 3 is a multi-agent system: multiple specialized agents working in coordination. A planning agent breaks a goal into subtasks. Specialist agents execute each subtask. A review agent checks outputs before they exit the system. The whole thing runs in parallel where possible.

This is what IBM describes as an agentic architecture with a "conductor" model overseeing tasks and decisions while supervising simpler, specialized agents. The orchestration can be vertical (hierarchical, with one agent directing others) or more horizontal, with agents collaborating as equals. Each model has tradeoffs: hierarchical systems are faster but prone to bottlenecks, while decentralized systems are more resilient but harder to debug.
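A hierarchical version of that structure can be sketched in a few functions. This is a toy under stated assumptions: `planner`, `specialist`, and `reviewer` are stand-ins for separate LLM-backed agents, the splitting rule is invented, and the subtasks run one after another here rather than in parallel.

```python
def planner(goal: str) -> list[str]:
    """Stand-in planning agent: split a goal into subtasks."""
    return [part.strip() for part in goal.split(" and ")]

def specialist(subtask: str) -> str:
    """Stand-in specialist agent: 'completes' a single subtask."""
    return f"done: {subtask}"

def reviewer(outputs: list[str]) -> bool:
    """Stand-in review agent: approve only if every output has real content."""
    return all(o.startswith("done: ") and len(o) > len("done: ") for o in outputs)

def run_system(goal: str) -> dict:
    subtasks = planner(goal)                        # conductor breaks the goal down
    outputs = [specialist(s) for s in subtasks]     # specialists do the work
    return {"outputs": outputs, "approved": reviewer(outputs)}   # review before exit

print(run_system("research the company and draft the email"))
```

Even in this toy, the reviewer can only catch what it was written to check, which hints at why system-level evaluation is a separate line item from agent-level evaluation.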

Build stack. Agent orchestration layer (LangGraph, AutoGen, custom orchestrator). Separate agents for separate domains, each with their own tools, prompts, and state. Message passing and coordination logic between agents. Persistent memory and session management. Evaluation at both the agent level and the system level. Security controls: agent permissions, rate limiting, audit logging. Deployment infrastructure: this is not running on a laptop.

What's harder than it looks. Emergent failures. When four agents are coordinating on a workflow, bugs become non-linear: a small error in one agent can surface as a larger failure in another. Testing a single agent is manageable. Testing interaction effects across a multi-agent system requires a different approach entirely. This is where underfunded builds fail silently.

Story points: 150 to 300+ | Estimated cost: $22,000 to $45,000+ | Timeline: 8 to 16 weeks

This tier is for complex, high-volume operational workflows: insurance underwriting pipelines, multi-source research platforms, autonomous operations monitoring.
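The three tier estimates above can be cross-checked against each other. The sketch below only divides the quoted cost ranges by the quoted story point ranges; the per-point rate it produces is an inference from those figures, not a published price, and the Tier 3 numbers are lower bounds because that range is open-ended.

```python
# Figures copied from the three tier estimates in this article.
TIERS = {
    "tier_1": {"points": (20, 40), "cost": (3_000, 6_000)},
    "tier_2": {"points": (60, 120), "cost": (9_000, 18_000)},
    "tier_3": {"points": (150, 300), "cost": (22_000, 45_000)},  # both ends are "+"
}

def implied_rate(tier: str) -> tuple[float, float]:
    """Dollars per story point at the low and high end of a tier."""
    p_lo, p_hi = TIERS[tier]["points"]
    c_lo, c_hi = TIERS[tier]["cost"]
    return (c_lo / p_lo, c_hi / p_hi)

for name in TIERS:
    low, high = implied_rate(name)
    print(name, round(low, 2), round(high, 2))
```

The ranges work out to roughly $150 per story point across all three tiers, so a quote that departs sharply from that ratio is worth a question about what the extra points or dollars cover.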

Before you spec a custom agent, answer this question honestly: does an off-the-shelf platform already do what you need?

Platforms like Zapier, Make, n8n, and Notion AI have added agentic features. Microsoft Copilot, Salesforce Einstein, and HubSpot AI are building deeply into their existing toolchains. If your use case lives inside one of those ecosystems, custom development may not be the right answer. Not yet.

Build custom when:

- Your data or workflow doesn't fit a standard platform's model.
- You need the agent embedded in a proprietary system (your app, your portal, your API).
- Compliance or data residency requirements rule out third-party platforms.
- The workflow is complex enough that no-code tools break down at the edges.
- You need the behavior to be configurable, not just the inputs.

Buy (or configure) when:

- The use case is generic: document summarization, basic routing, simple Q&A.
- Speed to deployment matters more than customization.
- You're testing whether the use case creates value before committing to build.

The mistake operators make is building Tier 3 when Tier 1 would prove the concept. And the mistake vendors make is quoting Tier 3 when the buyer asked for something much simpler.

These are the line items that turn a $9,000 orchestrated agent into a $22,000 one:

LLM API costs at scale. The agent itself may cost $12,000 to build. But if it processes 50,000 requests a month using GPT-4o, the monthly API bill is real and ongoing. Get an estimate before you commit.
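A back-of-the-envelope estimate takes a few lines. In the sketch below, only the 50,000 requests per month comes from the example above. The token counts and per-million-token prices are placeholders, not actual GPT-4o pricing, so substitute your provider's current rates and your own measured token usage.

```python
def monthly_api_cost(requests_per_month: int,
                     input_tokens: int, output_tokens: int,
                     usd_per_million_input: float,
                     usd_per_million_output: float) -> float:
    """Estimated monthly spend for a fixed average request size."""
    tokens_cost = (input_tokens * usd_per_million_input
                   + output_tokens * usd_per_million_output)
    return requests_per_month * tokens_cost / 1_000_000

# Placeholder inputs: 2,000 tokens in and 500 tokens out per request,
# at hypothetical prices of $3 and $12 per million tokens.
cost = monthly_api_cost(50_000, input_tokens=2_000, output_tokens=500,
                        usd_per_million_input=3.0, usd_per_million_output=12.0)
print(f"${cost:,.2f} per month")
```

Multi-step agents make several model calls per request, so the per-request token counts should cover the whole run, not a single call.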

Evaluation infrastructure. You cannot ship a production agent without a way to measure whether it's working correctly. Eval frameworks, golden datasets, and regression testing add scope that most first-time buyers don't include in their initial brief.
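In its simplest form, a golden dataset is a list of inputs with known correct answers, and a regression test is a threshold on how many the agent gets right. The sketch below assumes a hypothetical ticket-routing agent; the examples, the stand-in agent, and the 90% threshold are all invented for illustration.

```python
GOLDEN_SET = [   # hypothetical labeled examples
    ("Please refund my last invoice", "billing"),
    ("The app crashes when I log in", "technical"),
    ("How do I change my email address?", "account"),
]

def agent_under_test(text: str) -> str:
    """Stand-in for the deployed agent."""
    text = text.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "crash" in text:
        return "technical"
    return "billing"     # deliberate bug so the eval has something to catch

def accuracy(agent, dataset) -> float:
    """Share of golden examples the agent answers correctly."""
    correct = sum(1 for text, expected in dataset if agent(text) == expected)
    return correct / len(dataset)

def passes_regression(agent, dataset, threshold: float = 0.9) -> bool:
    """Gate a release on accuracy staying at or above the threshold."""
    return accuracy(agent, dataset) >= threshold
```

Here the stand-in agent scores two out of three and fails the gate. Running the same check after every prompt or model change is what turns a demo into something you can keep shipping.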

Human-in-the-loop workflows. If your use case requires human review before the agent acts (common in healthcare, legal, financial services), you're building an approval interface, a notification layer, and a correction mechanism. Each adds story points.
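The core of such a workflow is a queue that holds actions until a person approves or corrects them. The sketch below is a minimal in-memory version under stated assumptions: `ApprovalQueue` and its methods are hypothetical names, and a real build would add persistence, notifications, and an interface on top.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ApprovalQueue:
    pending: list[dict] = field(default_factory=list)    # waiting for a human
    executed: list[dict] = field(default_factory=list)   # cleared to run

    def submit(self, action: dict, requires_review: bool) -> str:
        """Hold risky actions for review; let the rest through."""
        if requires_review:
            self.pending.append(action)
            return "pending_review"
        self.executed.append(action)
        return "executed"

    def approve(self, index: int, corrected: Optional[dict] = None) -> dict:
        """Approve a pending action, optionally replacing it with a correction."""
        action = self.pending.pop(index)
        final = corrected if corrected is not None else action
        self.executed.append(final)
        return final
```

The `corrected` parameter is the correction mechanism in miniature: the reviewer can fix the agent's draft rather than only accepting or rejecting it.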

Integration complexity. Calling a clean REST API is simple. Integrating with a legacy CRM that has inconsistent data models is not. The more systems the agent touches, the higher the variance on the estimate.

Compliance and audit requirements. If every agent action needs to be logged, explainable, and auditable, that adds infrastructure. It's not optional in regulated industries.
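At minimum, an audit trail means one structured record per agent action. The sketch below shows one possible record shape as JSON; the field names and the `audit_record` helper are assumptions for illustration, and where the records are stored and how long they are retained are separate decisions.

```python
import json
from datetime import datetime, timezone

def audit_record(agent: str, action: str, inputs: dict, outcome: str) -> str:
    """Serialize one agent action as a JSON line with a UTC timestamp."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,        # which agent acted
        "action": action,      # what it did
        "inputs": inputs,      # what it was given
        "outcome": outcome,    # what happened
    }
    return json.dumps(record, sort_keys=True)

print(audit_record("router", "route_ticket", {"ticket_id": 42}, "billing"))
```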

An AI agent is not a static piece of software. LLM providers update models, and prompts that work today can produce different output after an update. APIs, output formats, and tool schemas change too.

For a production agent, budget roughly 10 to 20 percent of initial build cost per year for maintenance, monitoring, and prompt tuning. A $15,000 orchestrated agent might cost $1,500 to $3,000 per year to keep reliable.
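The same arithmetic applies to any build cost. The sketch below uses the 10 to 20 percent range from this article; the function names and the three-year horizon in the example are assumptions chosen for illustration.

```python
def annual_maintenance_range(build_cost: float,
                             low_pct: int = 10,
                             high_pct: int = 20) -> tuple[float, float]:
    """Yearly maintenance budget at the low and high end of the range."""
    return (build_cost * low_pct / 100, build_cost * high_pct / 100)

def total_cost_of_ownership(build_cost: float, years: int, pct: int) -> float:
    """Build cost plus maintenance at a fixed yearly percentage."""
    return build_cost + years * build_cost * pct / 100

print(annual_maintenance_range(15_000))           # (1500.0, 3000.0)
print(total_cost_of_ownership(15_000, 3, 20))     # 24000.0
```

At the high end of the range, three years of maintenance adds $9,000 to a $15,000 build, before any LLM API spend.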

Buyers who skip this budget tend to find out about it six months after launch when the agent starts returning degraded output and no one knows why. This is one of the reasons RAND's research on AI project failures emphasizes that organizations need to invest in infrastructure for model deployment and ongoing governance, not just the initial build.

This is the part most vendor conversations skip.

Agentic systems are powerful precisely because they act autonomously across multiple steps. That autonomy is also where risk concentrates. If the goal is poorly defined, or the reward signal is misaligned, the agent will optimize for something you didn't intend.

IBM's technical overview flags several realistic failure modes: an agent optimizing for engagement that surfaces misleading content, a trading agent that takes on excessive risk in pursuit of returns, a content moderation agent that over-censors legitimate discussion. None of these are hypothetical. They're the predictable result of deploying autonomous systems without clearly defined success criteria and feedback loops.

For operators building agents in regulated industries, the implication is direct: guardrails are not optional. They are part of the build. Budget them accordingly.

The single biggest driver of estimate variance is a vague brief. "We want an AI agent to help our sales team" produces a range so wide it's useless.

A scopeable brief answers:

- What specific goal does the agent pursue?
- What systems or data sources does it need to access?
- What does "done" look like for a single successful run?
- Who reviews the agent's output before it acts, and in what scenarios?
- What happens when the agent fails or produces wrong output?
- How many requests per day, and what's the latency requirement?

These are not technical questions. They're operational ones. Answering them before you approach a vendor changes the conversation entirely.
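One way to make the brief checkable is to treat each question as a required field. The sketch below is one possible shape, not a standard template: `AgentBrief`, its field names, and `unanswered` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class AgentBrief:
    goal: Optional[str] = None                 # what the agent pursues
    systems_and_data: Optional[str] = None     # what it needs to access
    definition_of_done: Optional[str] = None   # what one successful run looks like
    review_policy: Optional[str] = None        # who reviews, and when
    failure_handling: Optional[str] = None     # what happens on wrong output
    volume_and_latency: Optional[str] = None   # requests per day, response time

def unanswered(brief: AgentBrief) -> list[str]:
    """Names of the questions the brief has not answered yet."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name)]

vague = AgentBrief(goal="We want an AI agent to help our sales team")
print(unanswered(vague))
```

The vague brief quoted earlier answers one question of six, and even that answer is loose. Every name the function returns is a source of estimate variance.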

Fraction builds AI agents across all three tiers. But before a single line of code is written, we scope the build, define the assumptions, and produce a structured cost estimate with story point ranges by component: agent architecture, tool integrations, eval infrastructure, human-in-the-loop flows, deployment.

The Fraction Instant Project Estimator can take a product brief for an AI agent and return a breakdown by feature area, flagging the assumptions that will move the number. That gives a buyer an independent reference point before they engage a vendor or evaluate an agency quote. Not a final contract. A structured starting point with visible logic.

If you've been quoted a number for an AI agent with no breakdown of what drives it, that's the gap the estimator is built for.

AI agents in 2026 are genuinely capable of things that weren't possible two years ago. But the gap between a demo and a production system is still significant, and the gap between a vendor's description and what they actually deliver is still wider than it should be.

Across first-time AI builds, the failure mode we see most often is not a technology problem. It's a scoping problem. The buyer wanted a Tier 2 orchestrated agent. The vendor quoted something that looked like Tier 1 and quietly expanded scope after kick-off. The change orders followed.

The fix is not more AI literacy. It's better procurement: knowing which tier you need, what drives cost in that tier, and what a reasonable range looks like, before you sign anything.

Related reading: Custom AI Development: What It Costs, How It Works, and How to Avoid Getting Burned; Why do AI projects fail?

Sources

RAND Corporation. (2024). The Root Causes of Failure for Artificial Intelligence Projects and How They Can Succeed. https://www.rand.org/pubs/research_reports/RRA2680-1.html