March 10, 2026

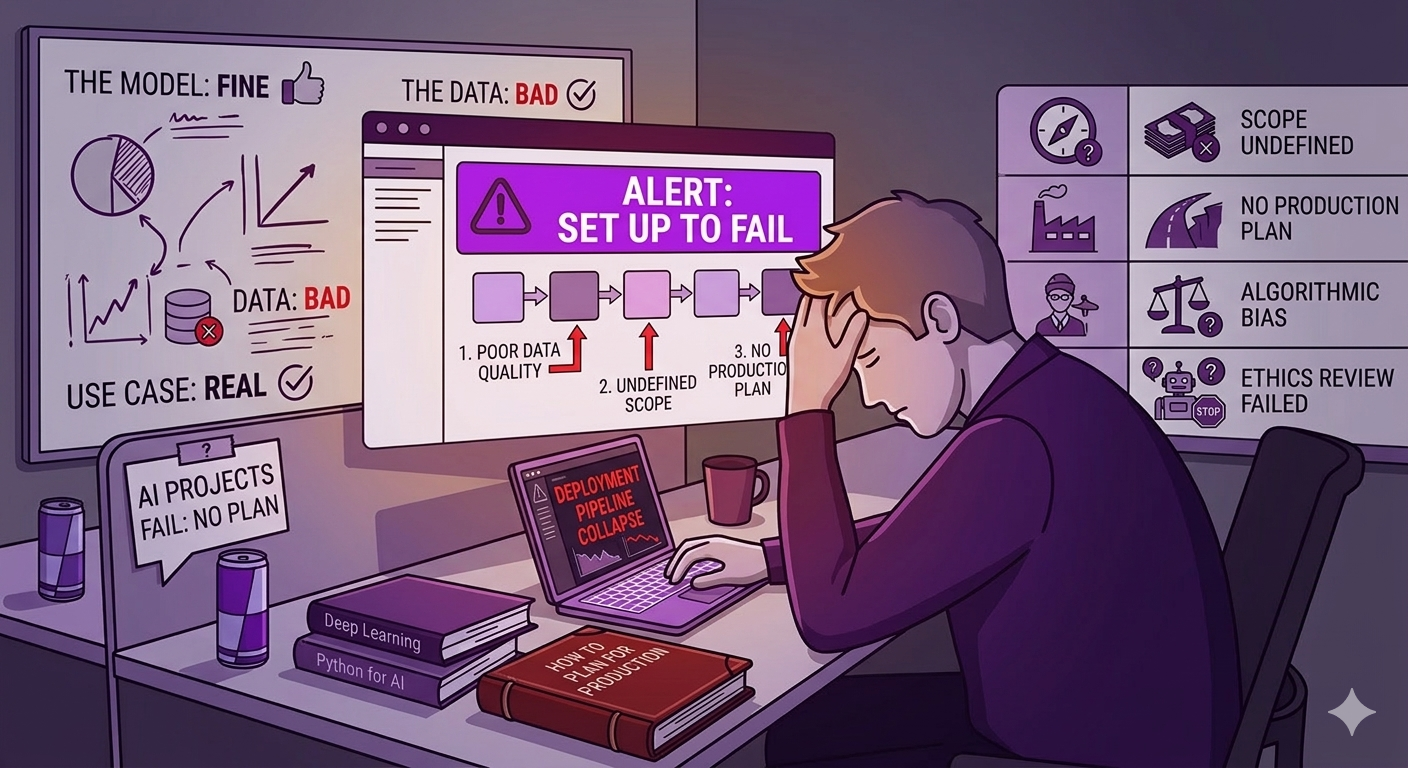

The failure statistics for AI initiatives are striking, but the headline figures can mislead. They tend to obscure what actually went wrong.

Gartner predicts that at least 30% of generative AI projects will be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, and unclear business value. That's the abandonment rate after a working prototype exists. It doesn't count projects that never made it that far.

BCG's survey of 1,000 C-level executives found that only 26% of companies generate tangible value from AI. The other 74% struggle to achieve and scale value. McKinsey's 2024 State of AI survey found that while 78% of organizations now use AI in at least one business function, only 17% report that 5% or more of their EBIT comes from it.

The pattern across all of this research is the same: adoption is high, value realization is low. Organizations are running experiments. Very few are running production systems that change business outcomes.

This is not a technology problem. It is a scoping, planning, and execution problem. And most of it is preventable.

The most common failure mode isn't technical. It's strategic. An AI initiative gets launched because a competitor announced something, or because an executive read a case study, or because the board asked why the company isn't "doing AI." The technology becomes the goal. The business outcome becomes secondary.

The anti-pattern looks like this: a team is tasked with "implementing AI" in a particular function. They pick a use case that seems tractable. They build something that technically works. Then nobody uses it, or the output isn't trusted, or the process it was meant to improve turns out to require changes nobody planned for.

The fix is to start with the outcome, not the technology. What decision does this change? What process does it replace or accelerate? What does "working" actually look like, and how will you measure it? If those questions don't have clear answers before scoping begins, the project is already at risk.

BCG's research captures this with what they call the 10-20-70 principle: AI success is roughly 10% algorithms, 20% data and technology, and 70% people, process, and organizational change. Leaders who win fundamentally redesign workflows around the technology. Those who fail try to automate existing broken processes and wonder why the output is broken too.

Data quality is the single most cited cause of AI project failure across every major research body that has studied the question. It is also the most consistently underestimated risk at the start of a project.

Gartner's 2024 survey of 1,203 data management leaders found that 63% of organizations either don't have, or aren't sure they have, the right data management practices for AI. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that lack AI-ready data. That's not a prediction about technology. It's a prediction about preparation.

The specific failure pattern: a team builds a model using a clean, curated dataset assembled for the purpose of the pilot. The pilot works well. They move to production, where the data is live, messy, inconsistently labeled, and structured differently than it was in the test environment. The model degrades. The output loses trust. The project stalls.

AI-ready data is not the same as data that exists. It means data that is accurate, consistently structured, appropriately labeled, and governed in a way that can scale. Most enterprise data environments were not built with AI in mind. Closing that gap is real work, and it typically takes longer than the model development itself.

The fix is a data audit before scoping is complete, not after. If you can't answer what data the model will be trained on, where it lives, who owns it, how current it is, and what happens when it changes, you are not ready to build.
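The audit questions can be turned into a record that either has an answer for each field or flags the gap. The sketch below is a minimal illustration, assuming a hypothetical `DataSourceAudit` record and an `audit_gaps` helper; the field names, the example sources, and the 90-day freshness cutoff are invented for illustration, not drawn from any standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DataSourceAudit:
    """Hypothetical record of the audit answers for one data source."""
    name: str                        # what data the model will use
    location: Optional[str]          # where it lives (system, table, bucket)
    owner: Optional[str]             # who is accountable for it
    last_refreshed: Optional[date]   # how current it is
    change_process: Optional[str]    # what happens when its structure or meaning changes


def audit_gaps(sources: list[DataSourceAudit], today: date,
               max_age_days: int = 90) -> list[str]:
    """Return one message per unanswered or failing audit question.

    An empty result only means every question has an answer; it does not
    prove the data is accurate, well labeled, or AI-ready.
    """
    gaps = []
    for s in sources:
        if not s.location:
            gaps.append(f"{s.name}: location unknown")
        if not s.owner:
            gaps.append(f"{s.name}: no owner")
        if s.last_refreshed is None:
            gaps.append(f"{s.name}: freshness unknown")
        else:
            age = (today - s.last_refreshed).days
            if age > max_age_days:
                gaps.append(f"{s.name}: last refreshed {age} days ago")
        if not s.change_process:
            gaps.append(f"{s.name}: no process for handling changes")
    return gaps


# Illustrative inputs; the source names and values are invented.
sources = [
    DataSourceAudit("support_tickets", "warehouse.tickets", "CX operations",
                    date(2026, 2, 1), "schema changes reviewed by data team"),
    DataSourceAudit("product_catalog", None, None, date(2025, 6, 1), None),
]
for gap in audit_gaps(sources, today=date(2026, 3, 10)):
    print(gap)
```

Keeping the output as plain messages makes it easy to paste into a scoping document. A real audit would also have to assess accuracy and labeling consistency, which this check does not attempt.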

AI projects are particularly vulnerable to scope creep because the technology creates genuine curiosity. Once a working prototype exists, stakeholders start asking what else it could do. Each addition seems incremental. Together, they transform a focused tool into a platform nobody agreed to fund.

Things usually break not during planning but at the first demo. A working demo generates enthusiasm, and enthusiasm generates requests. "Could it also do X?" is a reasonable question. The problem is when the answer is always yes, or when nobody is tracking what yes costs.

The other version of this failure is ambiguity that was present from the start but never resolved. Requirements described as outcomes ("make our customer service faster") without being translated into specific system behaviors. A scope document that reads more like a vision statement than a build plan. Assumptions that both sides made but neither side said out loud.

The fix is forcing specificity before kick-off. What are the exact inputs? What are the exact outputs? What does the system do when the input is ambiguous or incomplete? What is explicitly out of scope? A scope that can't answer these questions will generate change orders. It's a question of when, not whether.
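One way to force that specificity is to write the scope as rules the system itself can enforce, so that ambiguous or incomplete input follows a defined path rather than an improvised one. A minimal sketch, assuming a hypothetical ticket-triage tool; `ScopeSpec`, `route`, `check_output`, and every field and label name are illustrative, not taken from any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopeSpec:
    """Hypothetical scope for a ticket-triage tool, written as checkable rules."""
    required_inputs: frozenset[str]       # the exact inputs the system accepts
    allowed_outputs: frozenset[str]       # the exact labels it may return
    incomplete_input_result: str          # agreed behavior for incomplete input
    out_of_scope_intents: frozenset[str]  # requests it explicitly does not handle


def route(request: dict, spec: ScopeSpec) -> str:
    """Decide what happens to a request before any model is called."""
    if request.get("intent") in spec.out_of_scope_intents:
        return "out_of_scope"
    provided = {key for key, value in request.items() if value}
    if spec.required_inputs - provided:
        # Missing or empty fields follow the agreed path instead of a guess.
        return spec.incomplete_input_result
    return "send_to_model"


def check_output(label: str, spec: ScopeSpec) -> str:
    """Reject any model output that the scope never agreed to produce."""
    if label not in spec.allowed_outputs:
        raise ValueError(f"output {label!r} is outside the agreed scope")
    return label


spec = ScopeSpec(
    required_inputs=frozenset({"subject", "body", "customer_id"}),
    allowed_outputs=frozenset({"billing", "technical", "account"}),
    incomplete_input_result="needs_human_review",
    out_of_scope_intents=frozenset({"refund_approval"}),
)
print(route({"subject": "Invoice wrong", "body": "Charged twice",
             "customer_id": "C42"}, spec))
print(route({"subject": "Help", "body": ""}, spec))
```

Writing the scope this way makes expansion visible: supporting a new intent or output label means changing the spec, which is a change someone has to approve and price.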

Deploying a large language model or a machine learning pipeline into a production environment is not the same as building a traditional software application. The failure modes are different. The testing requirements are different. The maintenance requirements are different.

A team experienced in web or enterprise application development can build the infrastructure around an AI system. What they often can't do reliably is anticipate the specific ways AI systems degrade in production: model drift, hallucination in edge cases, latency under real load, behavior changes when input data shifts. These require engineers who have seen these failure modes before and know how to build the guardrails, monitoring, and evaluation systems that catch them.

The talent gap is real. Current research finds that between 34% and 53% of organizations with mature AI implementations cite a lack of AI-native skills as their primary obstacle. Data scientists are expected to operate as full-stack engineers, mastering DevOps, model frameworks, and security practices simultaneously. That combination remains rare.

The practical implication: when evaluating a vendor or team for an AI build, the question is not whether they have built software before. It is whether they have deployed AI into production and maintained it past the first three months. Ask for specific examples. Ask what broke and how they caught it.

Deploying an AI system is not the end of the project. In some ways it is the beginning of a more demanding phase.

Models drift. The world the model was trained on changes, and the model doesn't automatically update with it. A customer service model trained on 2023 data will start producing stale or wrong outputs as products, policies, and customer language evolve. Without a monitoring and retraining plan, that degradation happens silently.
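Silent degradation becomes visible once someone routinely compares live inputs against what the model saw in training. The sketch below computes the population stability index (PSI), one common drift score for a categorical input, as an illustration; the topic labels and counts are invented, and the 0.1 and 0.25 thresholds are a widely used rule of thumb rather than a standard. Input drift is only an early signal, so output quality still needs its own checks.

```python
import math
from collections import Counter


def category_shares(values, categories, floor=1e-4):
    """Share of each category in `values`, floored so the log stays finite."""
    counts = Counter(values)
    total = len(values)
    return {c: max(counts[c] / total, floor) for c in categories}


def population_stability_index(baseline, live):
    """PSI between training-time and live values of one categorical input.

    PSI = sum over categories of (live_share - base_share) * ln(live_share / base_share)
    """
    categories = set(baseline) | set(live)
    base = category_shares(baseline, categories)
    cur = category_shares(live, categories)
    return sum((cur[c] - base[c]) * math.log(cur[c] / base[c]) for c in categories)


# Invented example: ticket topics at training time vs. after a product launch.
training_topics = ["billing"] * 50 + ["technical"] * 50
live_topics = ["billing"] * 20 + ["technical"] * 50 + ["new_product"] * 30

psi = population_stability_index(training_topics, live_topics)
# Rule of thumb (not a standard): < 0.1 stable, 0.1-0.25 watch, > 0.25 investigate.
print(f"PSI = {psi:.2f}", "-> investigate" if psi > 0.25 else "-> ok")
```

The small floor keeps a category that is absent from one sample, such as a topic that only appears after a launch, from producing an infinite score. In this example that new category is what drives the index well past the usual alert level.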

User trust erodes. When an AI system produces a bad output, and it will, someone has to own the response. Was it the data? The prompt? The model? A change in inputs upstream? Organizations that haven't built feedback loops and escalation paths into the system discover these questions only after confidence has already been damaged.

Integration dependencies accumulate. An AI system that connects to a CRM, a data warehouse, and an ERP becomes dependent on all of them. When any of those systems update, the AI behavior can change in ways that aren't immediately visible. Maintenance requires ongoing attention, not a post-launch handoff.

The fix is treating the post-deployment roadmap as a deliverable, not an afterthought. Before a project closes, you should have defined: who monitors model performance, what triggers a retraining cycle, how users report bad outputs, and what the escalation path looks like. If the vendor doesn't raise these questions, raise them yourself.
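The post-deployment questions translate naturally into a small written plan with explicit thresholds. A minimal sketch, assuming weekly metrics are already being collected; `PostDeploymentPlan`, `WeeklyMetrics`, `retraining_triggers`, and every threshold value are hypothetical and would need to be agreed per project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PostDeploymentPlan:
    """Hypothetical plan agreed before project close; thresholds are illustrative."""
    monitor_owner: str               # who watches model performance
    escalation_contact: str          # who acts when a trigger fires
    max_drift_score: float = 0.25    # tolerated input drift (e.g. a PSI score)
    min_accuracy: float = 0.90       # measured on a recently labeled sample
    max_model_age_days: int = 180    # retrain on a schedule even if nothing else fires
    max_weekly_user_flags: int = 20  # bad outputs reported by users per week


@dataclass(frozen=True)
class WeeklyMetrics:
    drift_score: float
    accuracy: float
    model_age_days: int
    user_flags: int


def retraining_triggers(plan: PostDeploymentPlan, m: WeeklyMetrics) -> list[str]:
    """Return every agreed condition the latest metrics violate."""
    reasons = []
    if m.drift_score > plan.max_drift_score:
        reasons.append(f"input drift {m.drift_score:.2f} > {plan.max_drift_score}")
    if m.accuracy < plan.min_accuracy:
        reasons.append(f"accuracy {m.accuracy:.2f} < {plan.min_accuracy}")
    if m.model_age_days > plan.max_model_age_days:
        reasons.append(f"model is {m.model_age_days} days old")
    if m.user_flags > plan.max_weekly_user_flags:
        reasons.append(f"{m.user_flags} user-reported bad outputs this week")
    return reasons


plan = PostDeploymentPlan(monitor_owner="ML platform team",
                          escalation_contact="support operations lead")
week = WeeklyMetrics(drift_score=0.31, accuracy=0.93, model_age_days=40, user_flags=27)
reasons = retraining_triggers(plan, week)
if reasons:
    print(f"Escalate to {plan.escalation_contact}: " + "; ".join(reasons))
```

Checking every condition instead of stopping at the first one gives the escalation contact the full picture, which matters when the open question is whether the data, the model, or an upstream system changed.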

The organizations that consistently generate value from AI share a small set of behaviors. They are not the organizations with the biggest budgets or the most advanced technology.

They define the business outcome before selecting the technology. The question is not "what can we do with AI?" It is "what specific outcome are we trying to change, and is AI the right tool?"

They treat data readiness as a prerequisite, not a parallel workstream. The build doesn't start until the data questions are answered.

They scope tightly and resist expansion. A focused tool that works reliably is worth more than an ambitious platform that partially works.

They staff with engineers who have shipped AI to production, not just built prototypes.

They plan for production from the beginning. Monitoring, retraining, user feedback, and maintenance are part of the scope, not additions to it.

None of this is especially complicated. Most of it is discipline applied earlier than feels necessary at the time.

Most of these failure modes share a common origin: insufficient clarity at the start. Scope that wasn't specific. Data that wasn't audited. Outcomes that weren't defined. Assumptions that weren't surfaced.

This is where structured scoping pays its way: not as a formality, and not as a discovery phase that restates what you already said, but as the process of forcing every open question into the light before any money is committed to the build.

The Fraction project scoping tool is built for this gap. It takes your AI initiative brief and returns a structured breakdown by component: what's being built, what data it depends on, what the integration points are, where the assumptions are soft, and what the delivery risk looks like before you've signed anything. You're not getting a final quote. You're getting the questions you need answered before you can evaluate one.

That's the work that separates the 26% from the 74%. It happens before the first line of code is written.

AI projects fail for predictable reasons. A business case that was never specific. Data that wasn't ready. Scope that drifted. Engineers who hadn't shipped this before. No plan for what happens after the demo.

None of these are technology problems. They are process problems. And they are almost always detectable before the project starts, if someone asks the right questions.

The organizations that get value from AI are not luckier or better resourced than those that don't. They are more deliberate about what they build, why they build it, and what it will take to keep it working. That discipline is available to any organization willing to apply it before kick-off, not after the first change order.


Sources

Gartner. "Lack of AI-Ready Data Puts AI Projects at Risk." Gartner Newsroom, February 2025.

BCG. "AI Adoption in 2024: 74% of Companies Struggle to Achieve and Scale Value." Boston Consulting Group, October 2024.